Usability Testing an AI Sales Assistant

Role: UX Architect

Organization: Sanas AI

Duration: 1 month

- 96.9% assistant activation success

- 82.8% found speech prompts useful

- 70% see clear sales benefits

- 50% faster testing with Maze

Role Clarity

As UX Architect, I established the research framework, selected and configured Maze as the testing platform, reviewed the study structure, oversaw testing with participants, and synthesised findings into actionable recommendations. The product was ultimately shelved due to an executive decision unrelated to research outcomes.

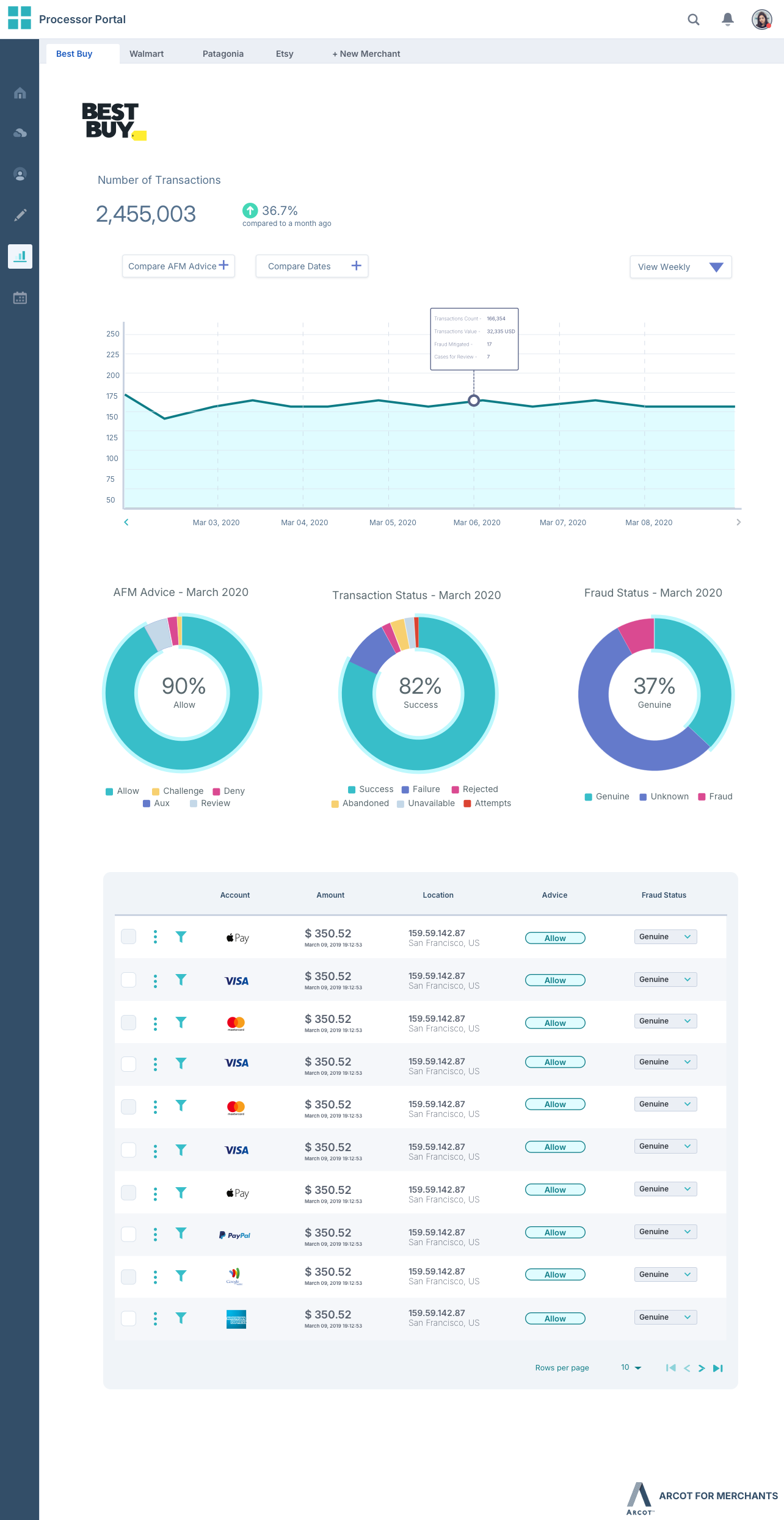

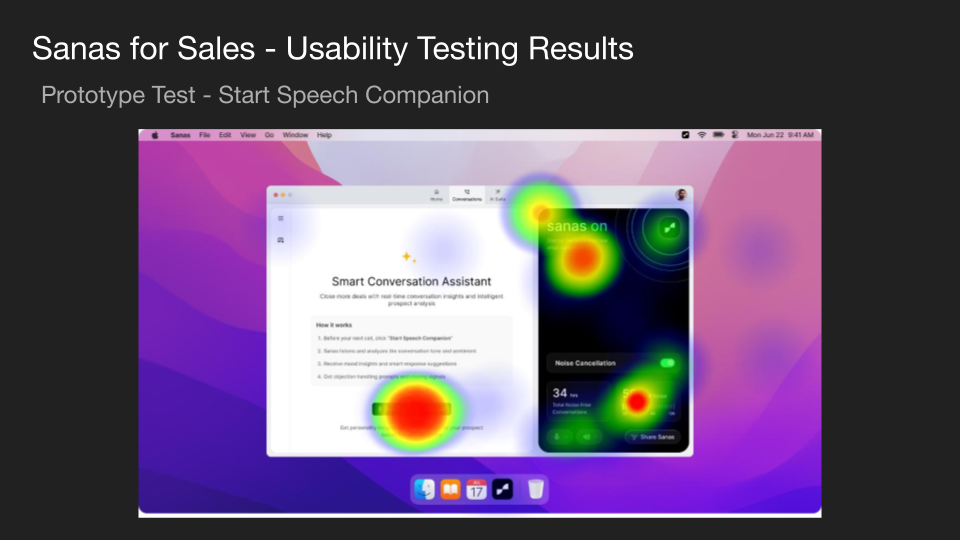

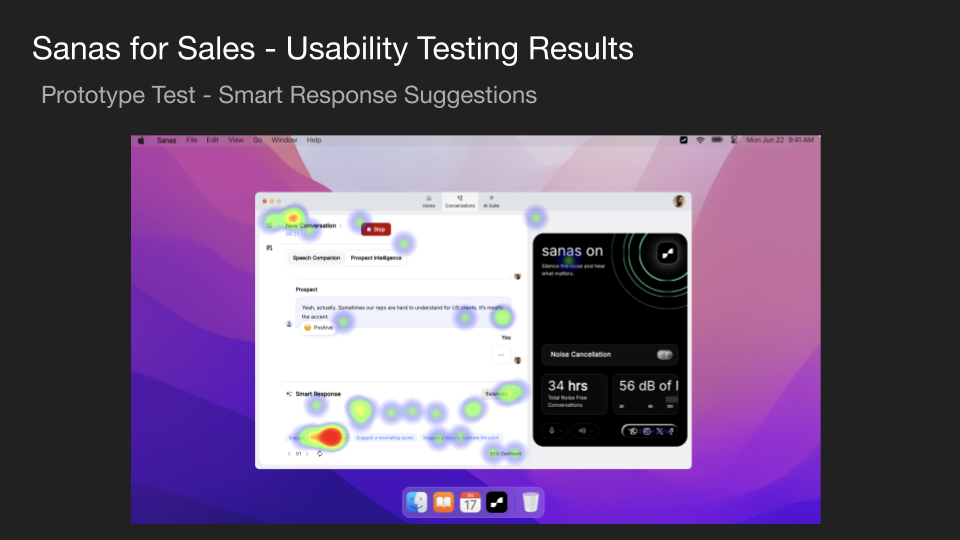

The Product

Sanas for Sales is an AI-powered desktop application for enterprise sales teams. It listens to live sales calls, analyses conversation tone and sentiment in real time, and surfaces smart response suggestions, objection handling prompts, and prospect intelligence — all while the call is in progress.

Research Approach

Goal: Validate three aspects of the design: usability of core workflows, visual hierarchy, and effectiveness of AI-powered features.

Method: Unmoderated usability testing via Maze with 20 participants aged 30–50.

Maze was selected over traditional moderated sessions to cut the testing cycle from 10 days to 5 days — a 50% reduction.

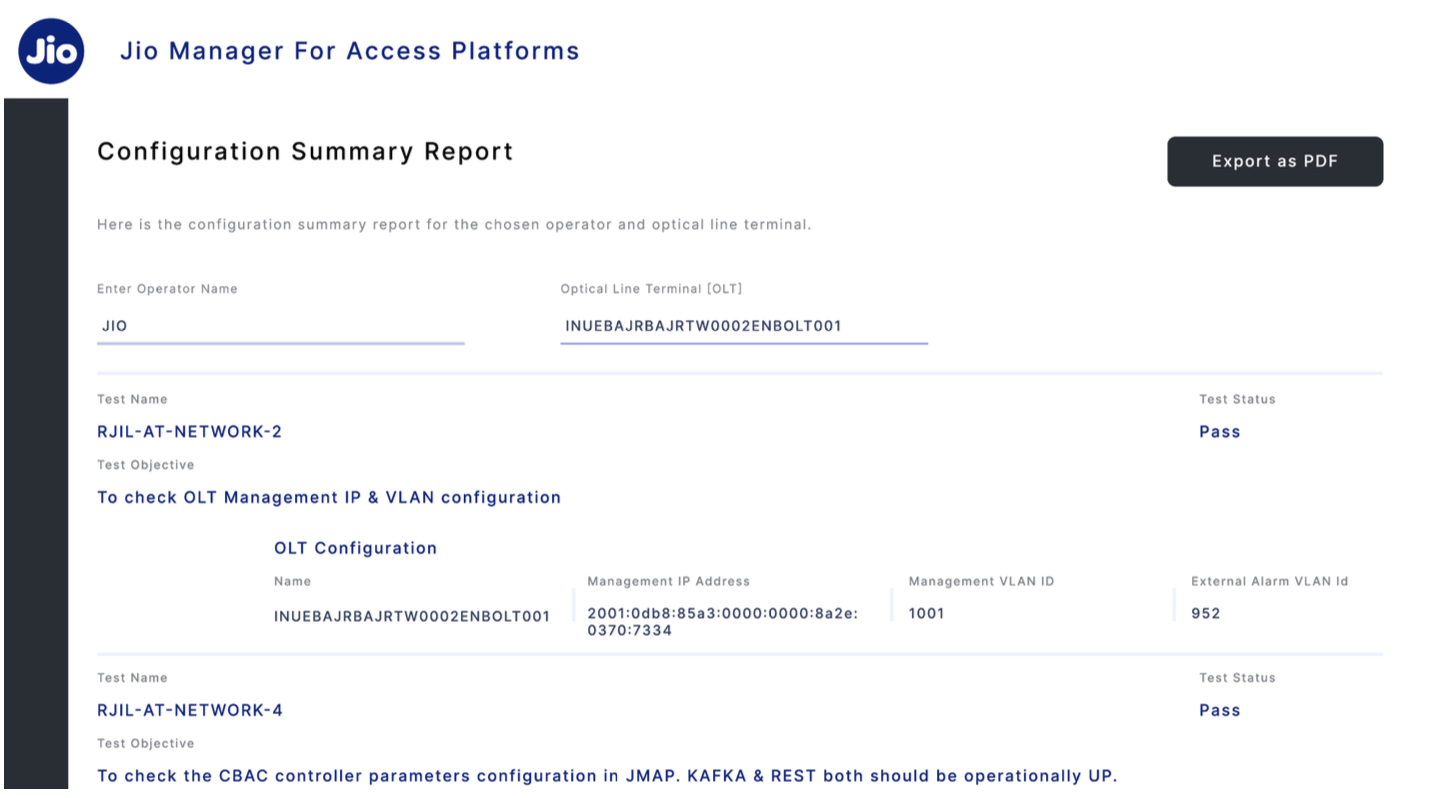

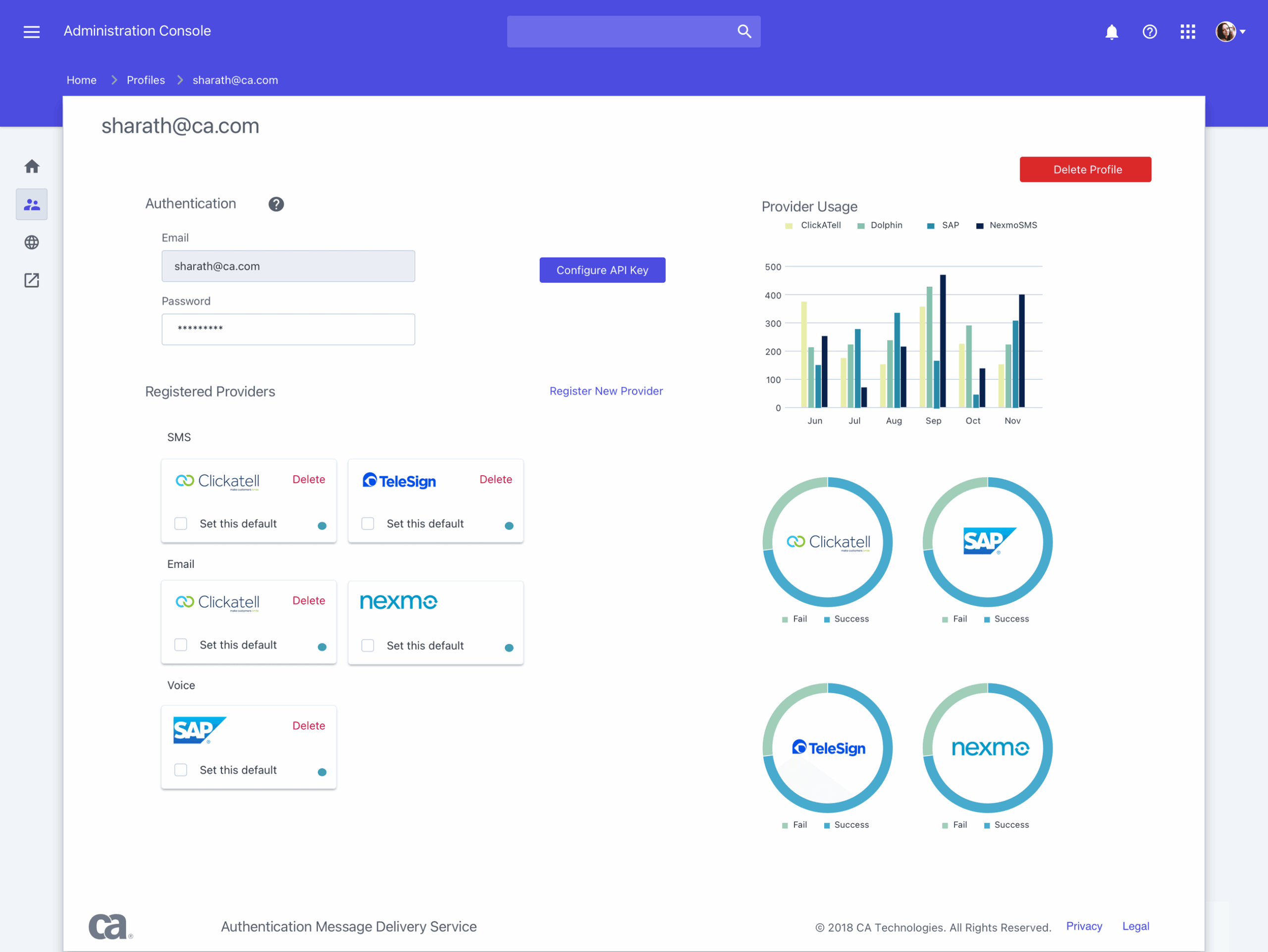

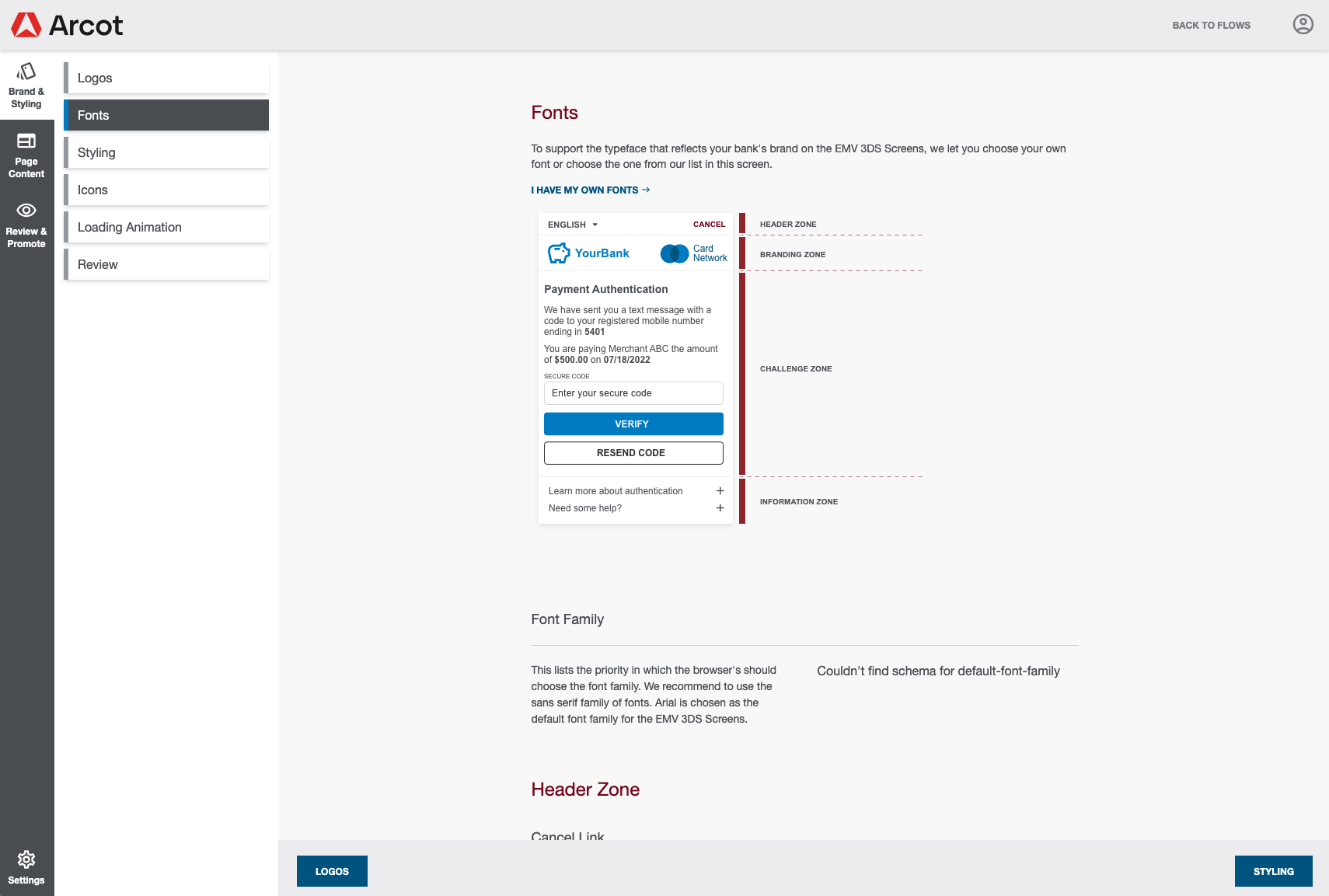

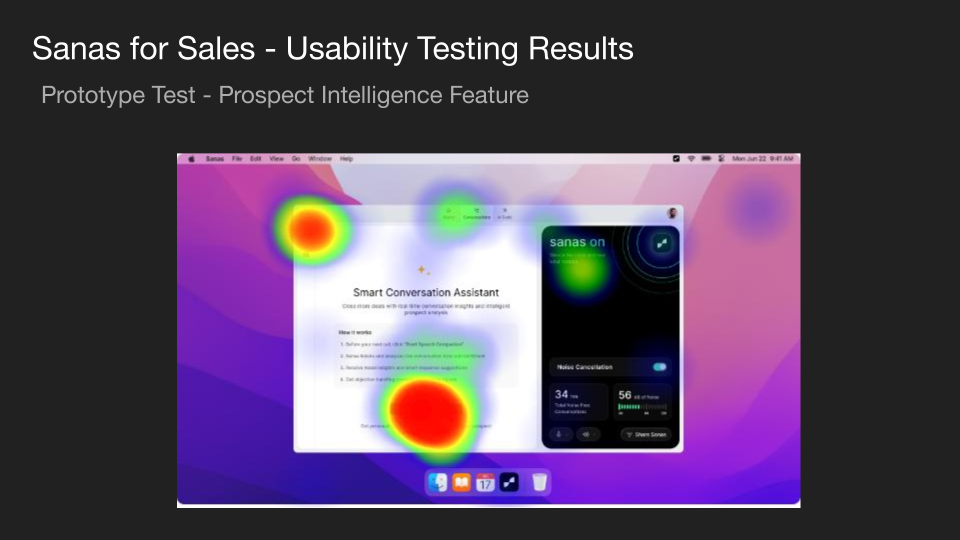

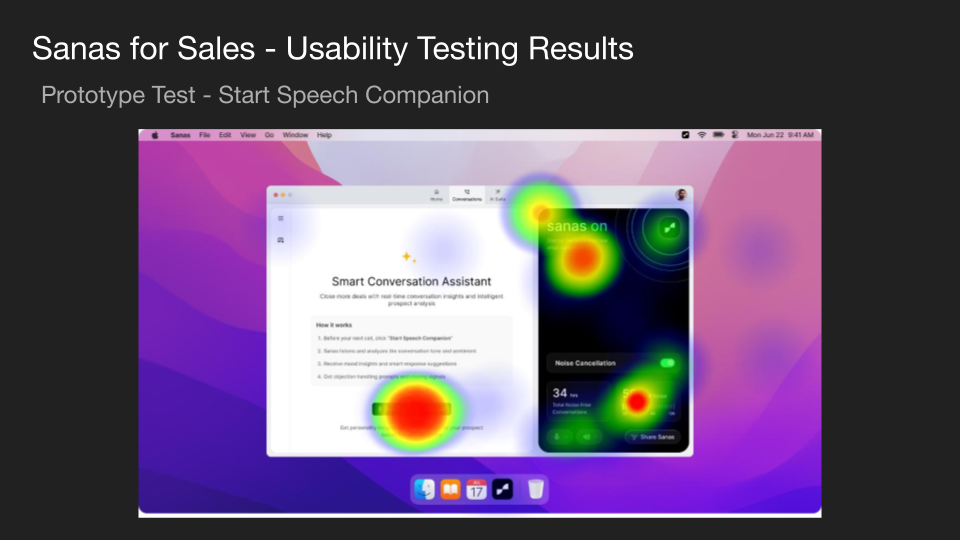

Prototype Testing - Heatmaps

Three key tasks were tested. The heatmaps below show where participants clicked during each task.

Prototype Test Results

| Task | Success Rate | Drop-off | Misclick | Average Duration |
| --- | --- | --- | --- | --- |
| Start Assistant | 96.9% | 3.1% | 75.9% | 21.1s |
| Speech Prompts | 69.0% | 31.0% | 94.2% | 33.0s |
| Profile Analysis | 71.0% | 14.0% | 57.0% | 30.0s |
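
The results above can also be screened programmatically to see which tasks need attention first. The following is a minimal sketch, not part of the original study: the `TaskResult` type, the `rank_by_misclick` helper, and the 70% threshold are all hypothetical choices made for illustration, and only the figures themselves come from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskResult:
    """One row of the prototype test results (percentages as reported)."""
    task: str
    success_pct: float
    drop_off_pct: float
    misclick_pct: float
    avg_duration_s: float


# Figures copied from the results table.
RESULTS = [
    TaskResult("Start Assistant", 96.9, 3.1, 75.9, 21.1),
    TaskResult("Speech Prompts", 69.0, 31.0, 94.2, 33.0),
    TaskResult("Profile Analysis", 71.0, 14.0, 57.0, 30.0),
]


def rank_by_misclick(results, threshold_pct=70.0):
    """Return tasks at or above the misclick threshold, worst first.

    The 70% default is an assumed cut-off for illustration only.
    """
    flagged = [r for r in results if r.misclick_pct >= threshold_pct]
    return sorted(flagged, key=lambda r: r.misclick_pct, reverse=True)


if __name__ == "__main__":
    for r in rank_by_misclick(RESULTS):
        print(f"{r.task}: {r.misclick_pct:.1f}% misclicks, "
              f"{r.success_pct:.1f}% success")
```

With the assumed threshold, Speech Prompts ranks first, followed by Start Assistant, which matches the discoverability issue described in the findings. A different cut-off would flag a different set of tasks.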

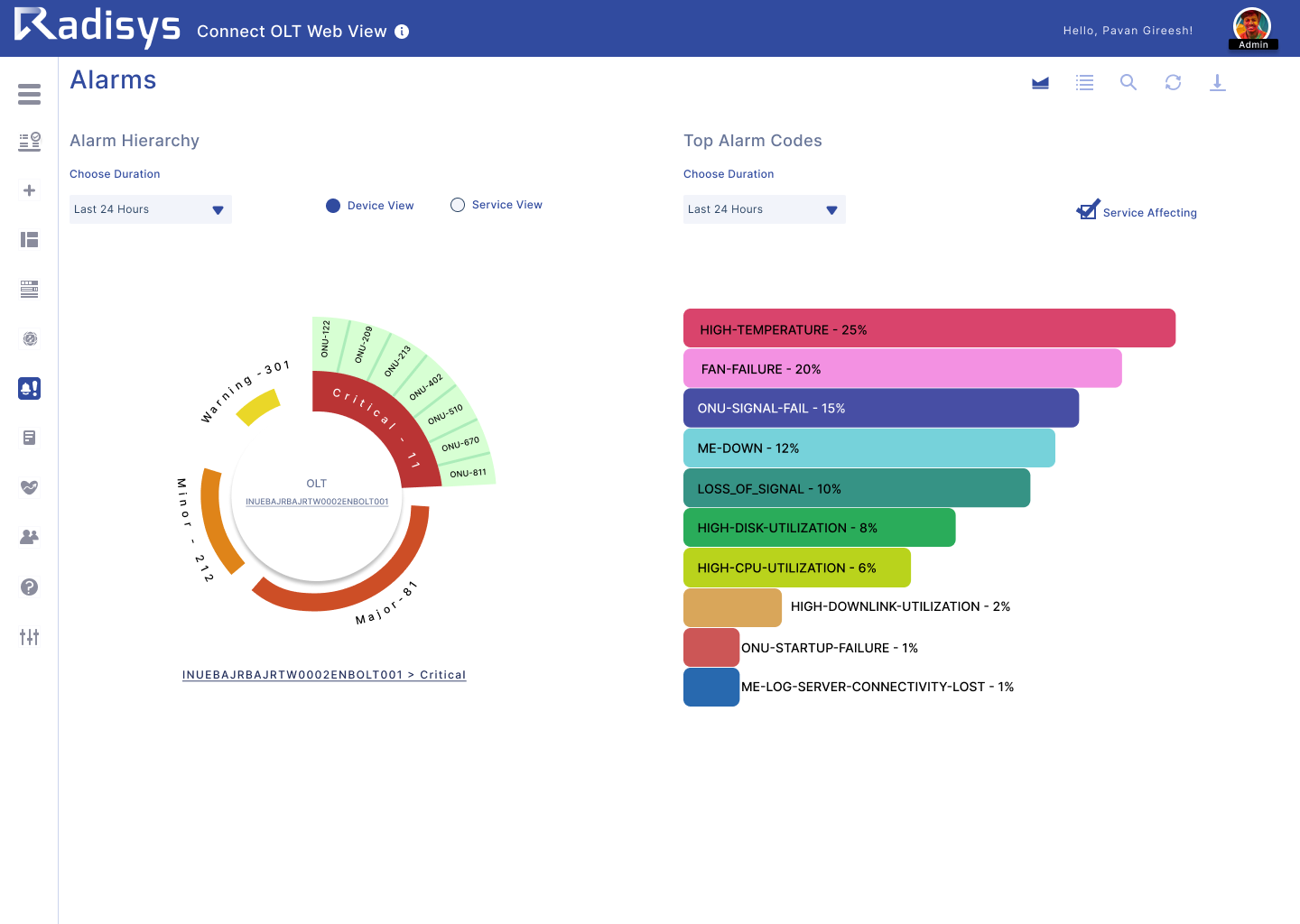

Key Findings

- 70% see clear sales benefits.

- Real-time suggestions mentioned positively in ~40% of responses.

- High enthusiasm for AI-guided objection handling.

- Privacy concerns in ~35% of responses.

- Reliability concerns in ~30%.

- High misclick rate on Speech Prompts (94.2%) signals discoverability issues.

- Onboarding needs clarity — multiple users found it 'confusing.'

- Speech Prompts entry point needs stronger visual affordance to reduce misclicks.

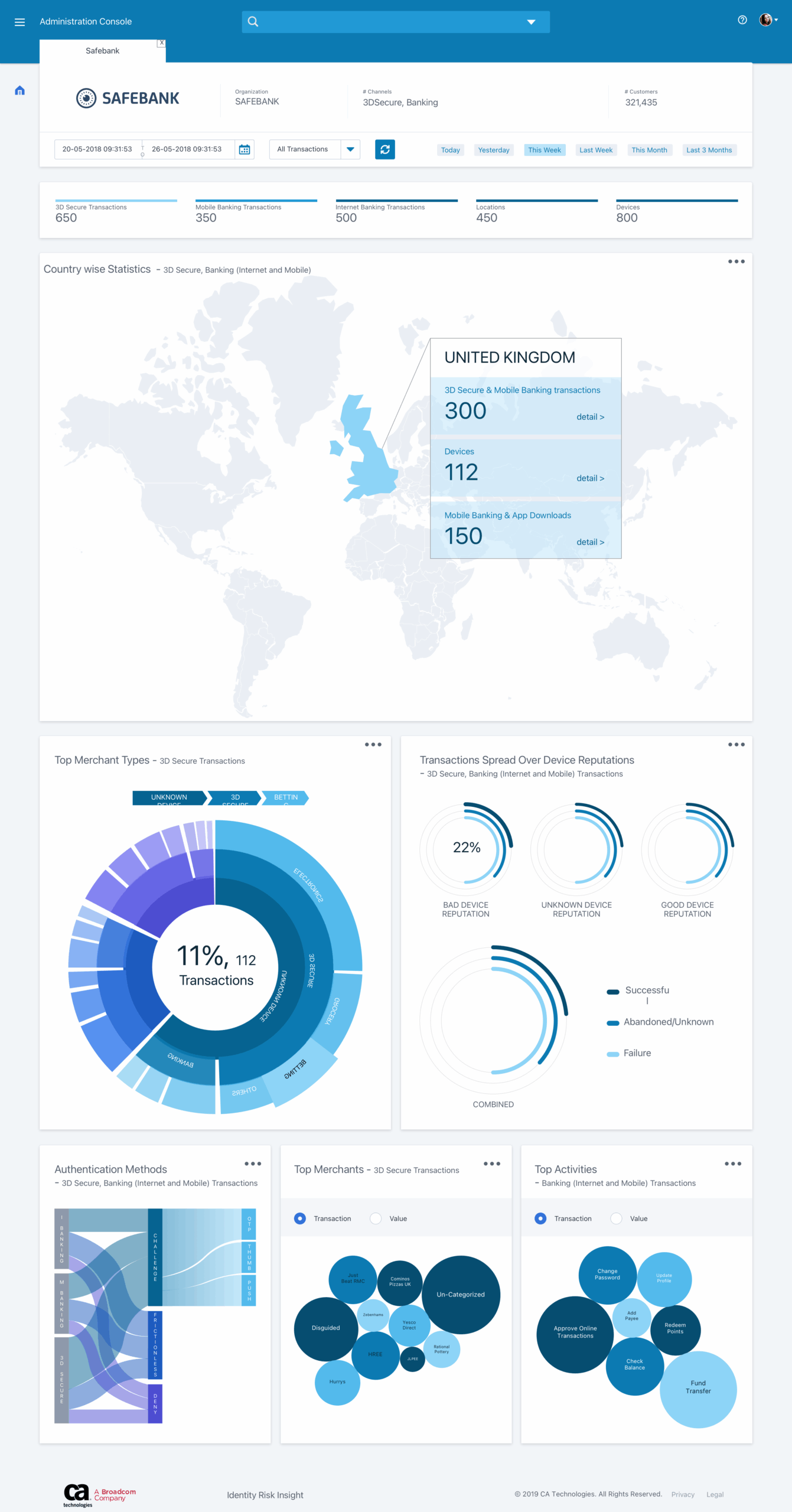

Open Question Highlights

82.8% found the speech prompts useful, but only 58.6% felt they sounded natural, meaning roughly 40% had reservations. Far more participants said they would tweak the suggested responses (45%) than use them as-is (14%), confirming the prompts needed refinement before launch.

Outcome & Learning

The research validated core functionality but surfaced real friction in the Speech Prompts flow — a 94.2% misclick rate is a clear signal that the entry point needed redesign before launch.

The product was shelved due to an executive decision unrelated to usability findings. The key takeaway for me: establishing a structured research process with Maze reduced testing cycle time by 50% — a framework I've carried forward. Strong research doesn't guarantee launch; it guarantees informed decisions.
